Proof of stake continues to be one of the most controversial discussions in the cryptocurrency space. Although the idea has many undeniable benefits, including efficiency, a larger security margin and future-proof immunity to hardware centralization concerns, proof of stake algorithms tend to be substantially more complex than proof of work-based alternatives, and there is a large amount of skepticism that proof of stake can work at all, particularly with regard to the supposedly fundamental “nothing at stake” problem. As it turns out, however, the problems are solvable, and one can make a rigorous argument that proof of stake, with all its benefits, can be made to be successful, but at a moderate cost. The purpose of this post is to explain exactly what this cost is, and how its impact can be minimized.

Economic Sets and Nothing at Stake

First, an introduction. The purpose of a consensus algorithm, in general, is to allow for the secure updating of a state according to some specific state transition rules, where the right to perform the state transitions is distributed among some economic set. An economic set is a set of users which can be given the right to collectively perform transitions via some algorithm, and the important property that the economic set used for consensus needs to have is that it must be securely decentralized, meaning that no single actor, or colluding set of actors, can take up the majority of the set, even if the actor has a fairly large amount of capital and financial incentive. So far, we know of three securely decentralized economic sets, and each economic set corresponds to a set of consensus algorithms:

- Owners of computing power: standard proof of work, or TaPoW. Note that this comes in specialized hardware, and (hopefully) general-purpose hardware variants.

- Stakeholders: all of the many variants of proof of stake

- A user’s social network: Ripple/Stellar-style consensus

Note that there have been some recent attempts to develop consensus algorithms based on traditional Byzantine fault tolerance theory; however, all such approaches are based on an M-of-N security model, and the concept of “Byzantine fault tolerance” by itself still leaves open the question of which set the N should be sampled from. In most cases, the set used is stakeholders, so we will treat such neo-BFT paradigms as simply being clever subcategories of “proof of stake”.

Proof of work has a nice property that makes it much simpler to design effective algorithms for it: participation in the economic set requires the consumption of a resource external to the system. This means that, when contributing one’s work to the blockchain, a miner must make the choice of which of all possible forks to contribute to (or whether to try to start a new fork), and the different options are mutually exclusive. Double-voting, including double-voting where the second vote is made many years after the first, is unprofitable, since it requires you to split your mining power among the different votes; the dominant strategy is always to put your mining power exclusively on the fork that you think is most likely to win.

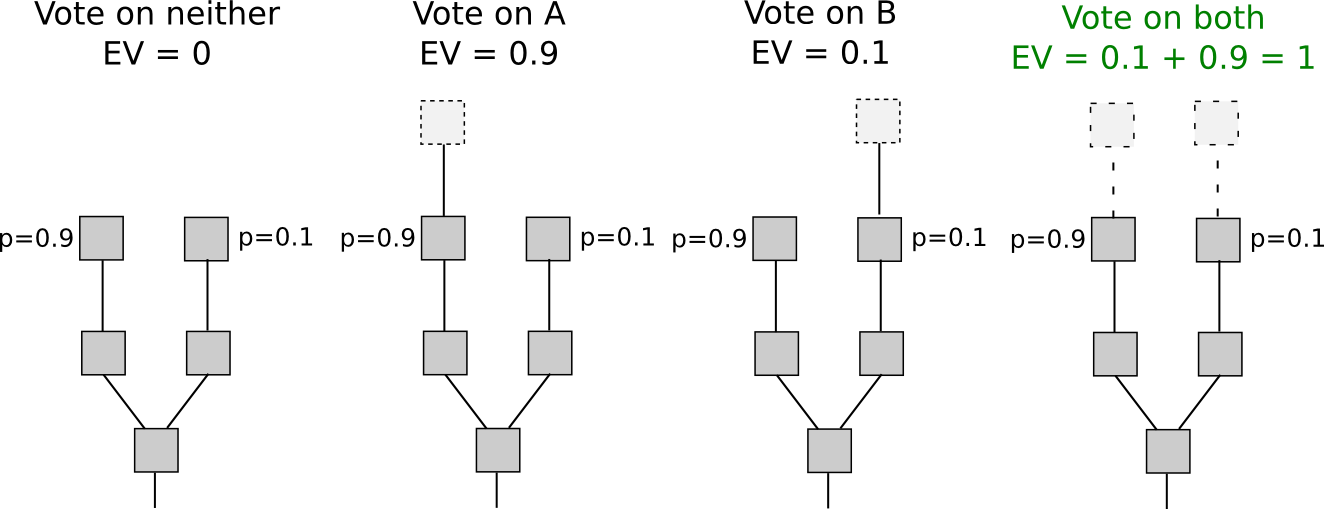

With proof of stake, however, the situation is different. Although inclusion into the economic set may be costly (although, as we will see, it is not always), voting is free. This means that “naive proof of stake” algorithms, which simply try to copy proof of work by making every coin a “simulated mining rig” with a certain chance per second of making the account that owns it usable for signing a block, have a fatal flaw: if there are multiple forks, the optimal strategy is to vote on all forks at once. This is the core of “nothing at stake”.

Note that there is one argument for why a user might still vote on only one fork in a proof-of-stake environment: “altruism-prime”. Altruism-prime is essentially the combination of actual altruism (on the part of users or software developers), expressed both as a direct concern for the welfare of others and the network and as a psychological moral disincentive against doing something that is obviously evil (double-voting), together with the “fake altruism” that occurs because holders of coins have a desire not to see the value of their coins go down.

Unfortunately, altruism-prime cannot be relied on exclusively, because the value of coins arising from protocol integrity is a public good and will thus be undersupplied (eg. if there are 1000 stakeholders, and each of their activity has a 1% chance of being “pivotal” in contributing to a successful attack that will knock coin value down to zero, then each stakeholder will accept a bribe equal to only 1% of their holdings). In the case of a distribution equivalent to the Ethereum genesis block, depending on how you estimate the probability of each user being pivotal, the required quantity of bribes would be equal to somewhere between 0.3% and 8.6% of total stake (or even less if an attack is nonfatal to the currency). However, altruism-prime is still an important concept that algorithm designers should keep in mind, so as to take maximal advantage of it in case it works well.
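The public-good arithmetic in the 1000-stakeholder example can be sketched in a few lines of Python. This is only an illustration, assuming a risk-neutral stakeholder who accepts any bribe larger than their expected loss; the function names `min_acceptable_bribe` and `total_bribe_fraction` are hypothetical and not part of any protocol.

```python
def min_acceptable_bribe(holdings, p_pivotal):
    """Expected loss to one stakeholder from joining an attack.

    Assumption: a successful attack sends the coin value to zero, and the
    stakeholder's participation is decisive with probability p_pivotal.
    """
    return holdings * p_pivotal


def total_bribe_fraction(holdings, p_pivotal):
    """Total bribes needed, as a fraction of all stake.

    holdings and p_pivotal are parallel lists, one entry per stakeholder.
    """
    total_stake = sum(holdings)
    bribes = sum(min_acceptable_bribe(h, p) for h, p in zip(holdings, p_pivotal))
    return bribes / total_stake


# 1000 equal stakeholders, each with a 1% chance of being pivotal
fraction = total_bribe_fraction([1.0] * 1000, [0.01] * 1000)
print(f"bribes needed: {fraction:.2%} of total stake")
```

With unequal holdings and different pivotal probabilities per user, the same calculation gives a range of answers, which is why the estimate for a realistic distribution is stated as an interval rather than a single number.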

Short and Long Range

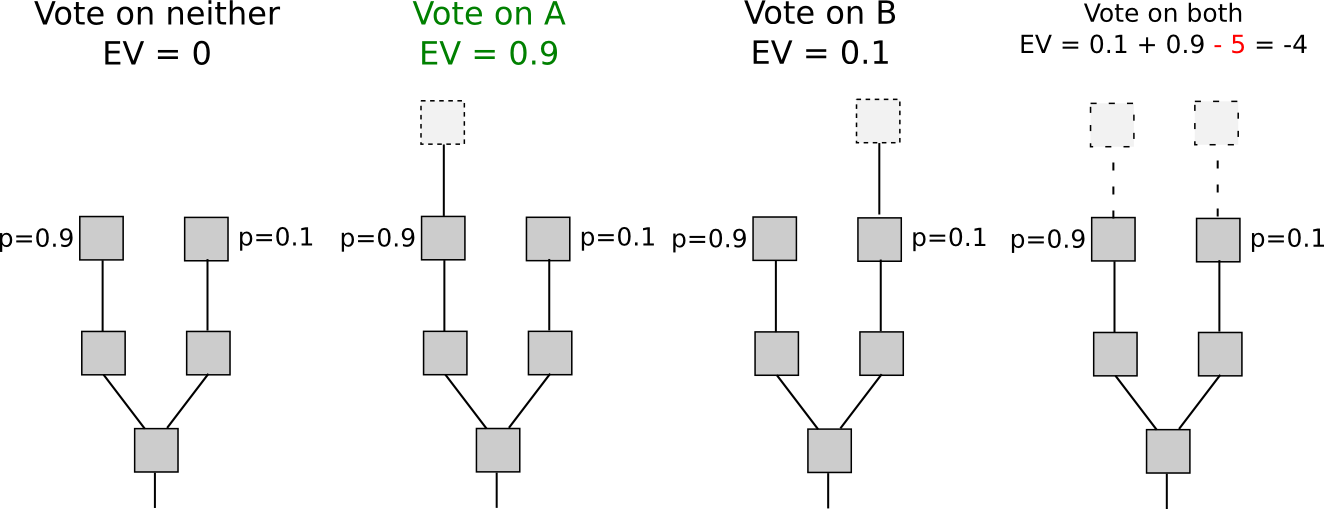

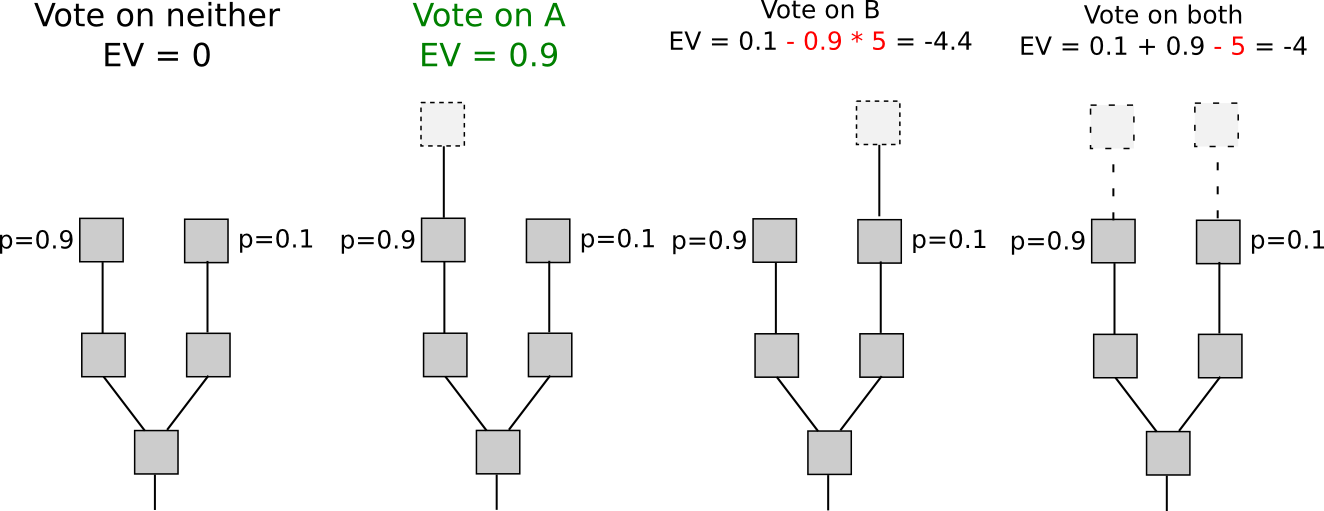

If we focus our attention specifically on short-range forks (forks lasting less than some number of blocks, perhaps 3000), then there actually is a solution to the nothing at stake problem: security deposits. In order to be eligible to receive a reward for voting on a block, the user must put down a security deposit, and if the user is caught voting on multiple forks then a proof of that transaction can be put into the original chain, taking the reward away. Hence, voting for only a single fork once again becomes the dominant strategy.
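A minimal sketch of the deposit-and-evidence idea follows. It assumes votes have already been signature-checked, and that an offender forfeits both the deposit and the reward (the text above only says the reward is taken away, so the deposit forfeiture here is an assumption). The `Vote` type and both function names are hypothetical.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Vote:
    voter: str
    height: int
    block_hash: str


def find_double_voters(votes):
    """Return voters who signed two different blocks at the same height."""
    seen = defaultdict(set)
    for v in votes:
        seen[(v.voter, v.height)].add(v.block_hash)
    return {voter for (voter, _height), hashes in seen.items() if len(hashes) > 1}


def settle(deposits, votes, reward):
    """Pay out deposit plus reward to honest voters, nothing to double-voters."""
    offenders = find_double_voters(votes)
    return {
        voter: 0 if voter in offenders else deposit + reward
        for voter, deposit in deposits.items()
    }


votes = [
    Vote("alice", 10, "0xaaa"),
    Vote("bob", 10, "0xaaa"),
    Vote("bob", 10, "0xbbb"),  # bob also signs the competing fork
]
print(settle({"alice": 100, "bob": 100}, votes, reward=5))
```

Because the penalty for being caught exceeds anything gained from the second vote, signing a single fork is the better strategy for as long as the deposit remains locked.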

Another set of strategies, called “Slasher 2.0” (in contrast to Slasher 1.0, the original security deposit-based proof of stake algorithm), involves simply penalizing voters that vote on the wrong fork, not voters that double-vote. This makes analysis substantially simpler, as it removes the need to pre-select voters many blocks in advance to prevent probabilistic double-voting strategies, although it does have the cost that users may be unwilling to sign anything if there are two alternatives of a block at a given height. If we want to give users the option to sign in such circumstances, a variant of logarithmic scoring rules can be used (see here for more detailed investigation). For the purposes of this discussion, Slasher 1.0 and Slasher 2.0 have identical properties.

The reason why this only works for short-range forks is simple: the user has to have the right to withdraw the security deposit eventually, and once the deposit is withdrawn there is no longer any incentive not to vote on a long-range fork starting far back in time using those coins. One class of strategies that attempt to deal with this is making the deposit permanent, but these approaches have a problem of their own: unless the value of a coin constantly grows so as to continually admit new signers, the consensus set ends up ossifying into a sort of permanent nobility. Given that one of the main ideological grievances that has led to cryptocurrency’s popularity is precisely the fact that centralization tends to ossify into nobilities that retain permanent power, copying such a property will likely be unacceptable to most users, at least for blockchains that are meant to be permanent. A nobility model may well be precisely the correct approach for special-purpose ephemeral blockchains that are meant to die quickly (eg. one might imagine such a blockchain existing for a round of a blockchain-based game).

One class of approaches at solving the problem is to combine the Slasher mechanism described above for short-range forks with a backup, transactions-as-proof-of-stake (TaPoS), for long-range forks. TaPoS essentially works by counting transaction fees as part of a block’s “score” (and requiring every transaction to include some bytes of a recent block hash to make transactions not trivially transferable), the theory being that a successful attack fork must spend a large quantity of fees catching up. However, this hybrid approach has a fundamental flaw: if we assume that the probability of an attack succeeding is near-zero, then every signer has an incentive to offer a service of re-signing all of their transactions onto a new blockchain in exchange for a small fee; hence, a zero probability of attacks succeeding is not game-theoretically stable. Does every user setting up their own node.js webapp to accept bribes sound unrealistic? Well, if so, there is a much easier way of doing it: sell old, no-longer-used private keys on the black market. Even without black markets, a proof of stake system would forever be under the threat of the individuals that originally participated in the pre-sale and had a share of genesis block issuance eventually finding each other and coming together to launch a fork.

Because of all the arguments above, we can safely conclude that this threat of an attacker building up a fork from arbitrarily long range is unfortunately fundamental, and in all non-degenerate implementations the issue is fatal to a proof of stake algorithm’s success in the proof of work security model. However, we can get around this fundamental barrier with a slight, but nevertheless fundamental, change in the security model.

Weak Subjectivity

Although there are many ways to categorize consensus algorithms, the division that we will focus on for the rest of this discussion is the following. First, we will provide the two most common paradigms today:

- Objective: a new node coming onto the network with no knowledge except (i) the protocol definition and (ii) the set of all blocks and other “important” messages that have been published can independently come to the exact same conclusion as the rest of the network on the current state.

- Subjective: the system has stable states where different nodes come to different conclusions, and a large amount of social information (ie. reputation) is required in order to participate.

Systems that use social networks as their consensus set (eg. Ripple) are all necessarily subjective; a new node that knows nothing but the protocol and the data can be convinced by an attacker that their 100000 nodes are trustworthy, and without reputation there is no way to deal with that attack. Proof of work, on the other hand, is objective: the current state is always the state that contains the highest expected amount of proof of work.

Now, for proof of stake, we will add a third paradigm:

- Weakly subjective: a new node coming onto the network with no knowledge except (i) the protocol definition, (ii) the set of all blocks and other “important” messages that have been published and (iii) a state from less than N blocks ago that is known to be valid can independently come to the exact same conclusion as the rest of the network on the current state, unless there is an attacker that permanently has more than X percent control over the consensus set.

Under this model, we can clearly see how proof of stake works perfectly fine: we simply forbid nodes from reverting more than N blocks, and set N to be the security deposit length. That is to say, if state S has been valid and has become an ancestor of at least N valid states, then from that point on no state S’ which is not a descendant of S can be valid. Long-range attacks are no longer a problem, for the trivial reason that we have simply said that long-range forks are invalid as part of the protocol definition. This rule clearly is weakly subjective, with the added bonus that X = 100% (ie. no attack can cause permanent disruption unless it lasts more than N blocks).
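The revert limit can be sketched as a check a node runs before switching forks. This is a simplified illustration, assuming each chain is represented as a list of block hashes starting at genesis; `common_prefix_len` and `may_switch` are hypothetical names, and a real client would apply its normal validity and scoring rules on top of this check.

```python
def common_prefix_len(a, b):
    """Number of leading blocks the two chains share."""
    n = 0
    for x, y in zip(a, b):
        if x != y:
            break
        n += 1
    return n


def may_switch(current, candidate, max_revert):
    """True if adopting candidate reverts at most max_revert blocks of current."""
    reverted = len(current) - common_prefix_len(current, candidate)
    return reverted <= max_revert


current = ["g", "a", "b", "c"]
candidate = ["g", "a", "x", "y", "z"]  # forks off after block "a"

print(may_switch(current, candidate, max_revert=2))
print(may_switch(current, candidate, max_revert=1))
```

Here switching would revert blocks "b" and "c", so the candidate is acceptable with a limit of 2 and rejected outright with a limit of 1, no matter how long or heavily signed the competing fork is.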

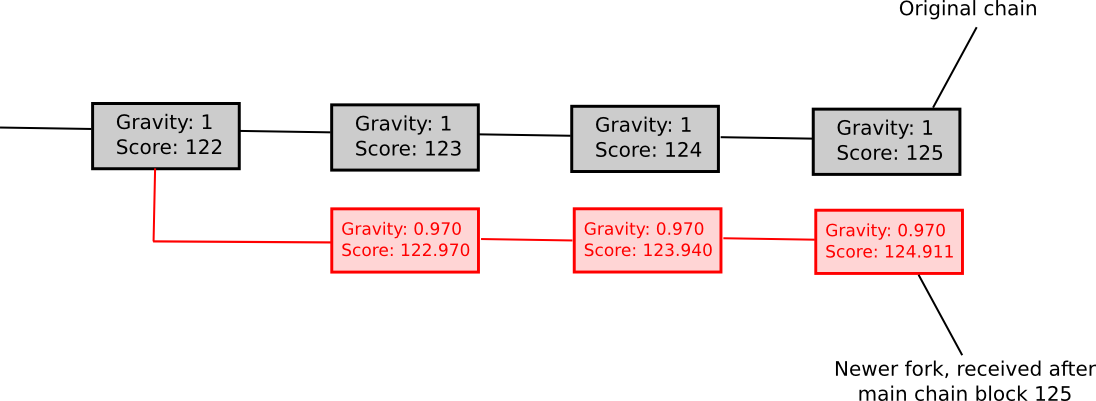

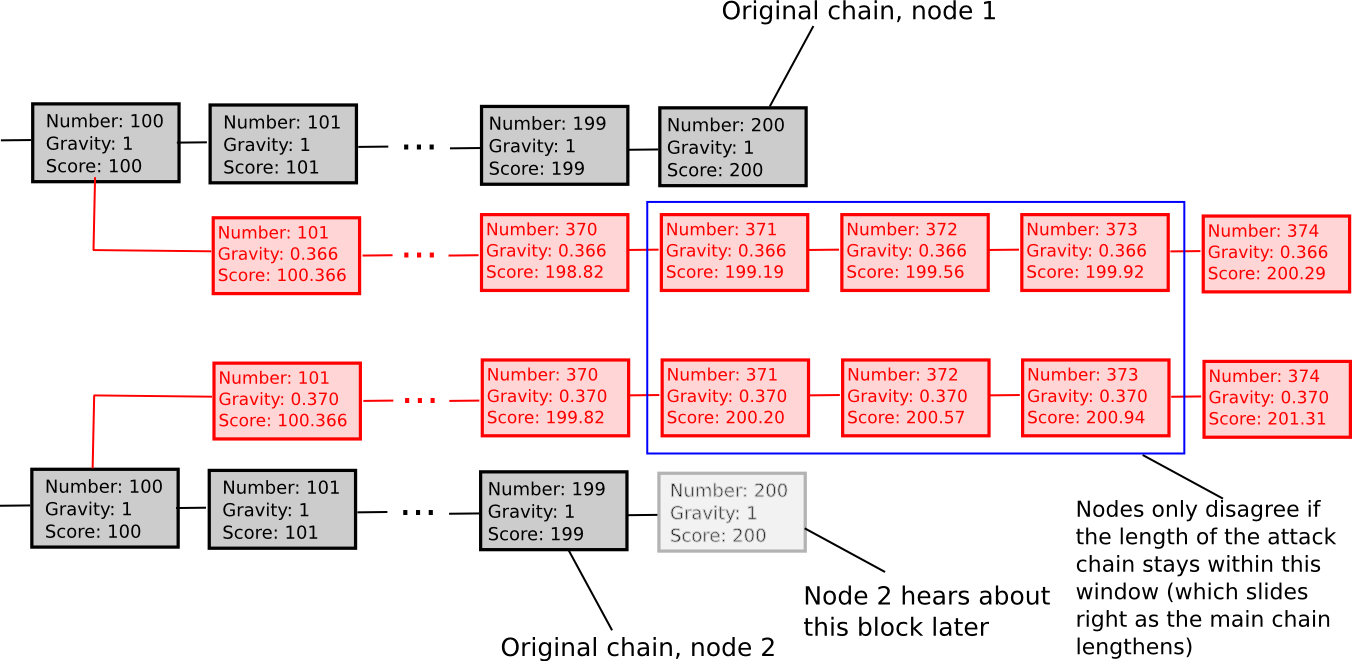

Another weakly subjective scoring method is exponential subjective scoring (ESS), defined as follows:

- Every state S maintains a “score” and a “gravity”

- score(genesis) = 0, gravity(genesis) = 1

- score(block) = score(block.parent) + weight(block) * gravity(block.parent), where weight(block) is usually 1, though more advanced weight functions can also be used (eg. in Bitcoin, weight(block) = block.difficulty can work well)

- If a node sees a new block B’ with B as parent, then if n is the length of the longest chain of descendants from B at that time, gravity(B’) = gravity(B) * 0.99 ^ n (note that values other than 0.99 can also be used).
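The four rules above can be sketched directly in Python. This is a minimal illustration from the point of view of a single node, assuming blocks arrive one at a time and every block has weight 1; the `Block` class and the `receive` and `longest_descendant_chain` helpers are hypothetical names.

```python
from dataclasses import dataclass, field
from typing import List, Optional

DECAY = 0.99  # the text notes that other values can also be used


@dataclass(eq=False)
class Block:
    parent: Optional["Block"] = None
    score: float = 0.0    # score(genesis) = 0
    gravity: float = 1.0  # gravity(genesis) = 1
    children: List["Block"] = field(default_factory=list)


def longest_descendant_chain(block):
    """Length of the longest chain of descendants this node has seen from block."""
    if not block.children:
        return 0
    return 1 + max(longest_descendant_chain(c) for c in block.children)


def receive(parent, weight=1.0):
    """Record a newly seen block with the given parent and return it."""
    n = longest_descendant_chain(parent)  # measured before attaching the new block
    block = Block(
        parent=parent,
        score=parent.score + weight * parent.gravity,
        gravity=parent.gravity * DECAY ** n,
    )
    parent.children.append(block)
    return block


genesis = Block()
a1 = receive(genesis)
a2 = receive(a1)
a3 = receive(a2)

# A competing fork from genesis that this node only hears about after a1..a3
b1 = receive(genesis)
b2 = receive(b1)
print(a2.score, b2.score)
```

In this run the late fork's first block inherits a reduced gravity of 0.99 ^ 3, so every block built on top of it adds less than a full point of score for this node, while blocks on the fork it saw first keep adding a full point each.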

Essentially, we explicitly penalize forks that come later. ESS has the property that, unlike more naive approaches at subjectivity, it mostly avoids permanent network splits; if the time between the first node on the network hearing about block B and the last node on the network hearing about block B is an interval of k blocks, then a fork is unsustainable unless the lengths of the two forks remain forever within roughly k percent of each other (if that is the case, then the differing gravities of the forks will ensure that half of the network will forever see one fork as higher-scoring and the other half will support the other fork). Hence, ESS is weakly subjective with X roughly corresponding to how close to a 50/50 network split the attacker can create (eg. if the attacker can create a 70/30 split, then X = 0.29).

In general, the “max revert N blocks” rule is superior and less complex, but ESS may prove to make more sense in situations where users are fine with high degrees of subjectivity (ie. N being small) in exchange for a rapid ascent to very high degrees of security (ie. immune to a 99% attack after N blocks).

Consequences

So what would a world powered by weakly subjective consensus look like? First of all, nodes that are always online would be fine; in those cases weak subjectivity is by definition equivalent to objectivity. Nodes that pop online once in a while, or at least once every N blocks, would also be fine, because they would be able to constantly get an updated state of the network. However, new nodes joining the network, and nodes that appear online after a very long time, would not have the consensus algorithm reliably protecting them. Fortunately, for them, the solution is simple: the first time they sign up, and every time they stay offline for a very long time, they need only get a recent block hash from a friend, a blockchain explorer, or simply their software provider, and paste it into their blockchain client as a “checkpoint”. They will then be able to securely update their view of the current state from there.
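How a client might use such a checkpoint can be sketched as a filter over candidate chains. This is an illustration only: chains are assumed to be lists of block hashes, the function names are hypothetical, and picking the longest surviving chain stands in for whatever scoring rule the real protocol uses.

```python
def chains_matching_checkpoint(candidates, checkpoint_hash):
    """Keep only the chains that contain the trusted checkpoint block."""
    return [chain for chain in candidates if checkpoint_hash in chain]


def pick_chain(candidates, checkpoint_hash):
    """Choose among chains consistent with the checkpoint, or None if there are none.

    Assumption: "longest chain" is a placeholder for the protocol's scoring rule.
    """
    valid = chains_matching_checkpoint(candidates, checkpoint_hash)
    if not valid:
        return None
    return max(valid, key=len)


honest = ["g", "h1", "h2", "h3"]
long_range_attack = ["g", "x1", "x2", "x3", "x4"]  # longer, but forks before the checkpoint

chosen = pick_chain([honest, long_range_attack], checkpoint_hash="h2")
print(chosen)
```

The attack chain is discarded before any scoring happens, which is the sense in which a single recent hash from a trusted source is enough to bootstrap a new or long-offline node.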

This security assumption, the idea of “getting a block hash from a friend”, may seem unrigorous to many; Bitcoin developers often make the point that if the solution to long-range attacks is some alternative deciding mechanism X, then the security of the blockchain ultimately depends on X, and so the algorithm is in reality no more secure than using X directly, implying that most X, including our social-consensus-driven approach, are insecure.

However, this logic ignores why consensus algorithms exist in the first place. Consensus is a social process, and human beings are fairly good at engaging in consensus on our own without any help from algorithms; perhaps the best example is the Rai stones, where a tribe in Yap essentially maintained a blockchain recording changes to the ownership of stones (used as a Bitcoin-like zero-intrinsic-value asset) as part of its collective memory. The reason why consensus algorithms are needed is, quite simply, because humans do not have infinite computational power, and prefer to rely on software agents to maintain consensus for us. Software agents are very smart, in the sense that they can maintain consensus on extremely large states with extremely complex rulesets with perfect precision, but they are also very ignorant, in the sense that they have very little social information, and the challenge of consensus algorithms is that of creating an algorithm that requires as little input of social information as possible.

Weak subjectivity is exactly the correct solution. It solves the long-range problems with proof of stake by relying on human-driven social information, but leaves to a consensus algorithm the role of increasing the speed of consensus from many weeks to 12 seconds and of allowing the use of highly complex rulesets and a large state. The role of human-driven consensus is relegated to maintaining consensus on block hashes over long periods of time, something which people are perfectly good at. A hypothetical oppressive government which is powerful enough to actually cause confusion over the true value of a block hash from one year ago would also be powerful enough to overpower any proof of work algorithm, or cause confusion about the rules of the blockchain protocol.

Note that we do not need to fix N; theoretically, we can come up with an algorithm that allows users to keep their deposits locked down for longer than N blocks, and users can then take advantage of those deposits to get a much more fine-grained reading of their security level. For example, if a user has not logged in since T blocks ago, and 23% of deposits have term length greater than T, then the user can come up with their own subjective scoring function that ignores signatures with newer deposits, and thereby be secure against attacks with up to 11.5% of total stake. An increasing interest rate curve can be used to incentivize longer-term deposits over shorter ones, or for simplicity we can just rely on altruism-prime.
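The 23% to 11.5% step follows from counting only long-term deposits and assuming an attacker needs a majority of the deposits that are counted. A small sketch under that assumption (the function name and the `(amount, term_length)` representation are hypothetical):

```python
def secure_stake_fraction(deposits, offline_blocks):
    """Fraction of total stake an attacker must exceed to fool a returning user.

    deposits is a list of (amount, term_length_in_blocks) pairs.
    Assumption: the user ignores deposits with term length <= offline_blocks,
    and an attack needs more than half of the deposits that remain.
    """
    total = sum(amount for amount, _term in deposits)
    long_term = sum(amount for amount, term in deposits if term > offline_blocks)
    return 0.5 * long_term / total


# 23% of deposits are locked for longer than the user has been offline
deposits = [(23, 5000), (77, 1000)]
print(secure_stake_fraction(deposits, offline_blocks=2000))
```

The longer a user stays offline, the fewer deposits qualify, so the security level falls gradually instead of dropping to nothing the moment a fixed N is exceeded.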

Marginal Cost: The Other Objection

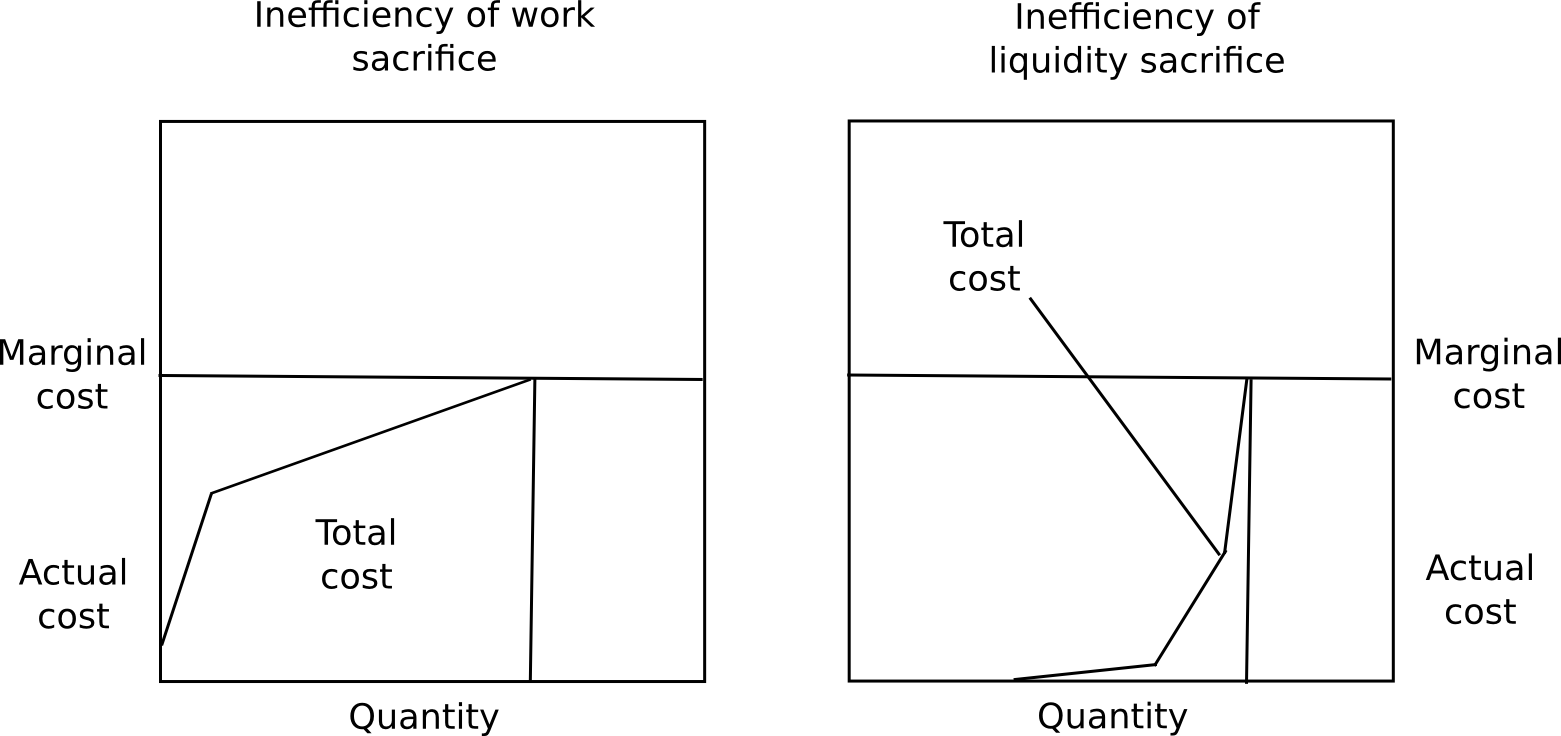

One objection to long-term deposits is that they incentivize users to keep their capital locked up, which is inefficient, the exact same problem as proof of work. However, there are four counterpoints to this.

First, marginal cost is not total cost, and the ratio of total cost divided by marginal cost is much less for proof of stake than proof of work. A user will likely experience close to no pain from locking up 50% of their capital for a few months, a slight amount of pain from locking up 70%, but would find locking up more than 85% intolerable without a large reward. Additionally, different users have very different preferences for how willing they are to lock up capital. Because of these two factors put together, regardless of what the equilibrium interest rate ends up being, the vast majority of the capital will be locked up at far below marginal cost.
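The gap between total and marginal cost can be illustrated with a toy model. Everything here is an assumption made for illustration: each unit of capital has a private yearly lock-up cost expressed as a rate, a unit is deposited whenever the reward rate covers that cost, and the sample numbers are invented.

```python
def lockup_summary(private_costs, reward_rate):
    """Compare what depositors actually bear with what the protocol pays out.

    private_costs: per-unit yearly cost of locking capital, one entry per unit.
    Returns (total_private_cost, total_reward_paid) for the units that deposit.
    """
    participants = [c for c in private_costs if c <= reward_rate]
    total_private_cost = sum(participants)
    total_reward_paid = reward_rate * len(participants)
    return total_private_cost, total_reward_paid


# Invented preferences: most capital is nearly costless to lock, a little is not
costs = [0.0, 0.005, 0.01, 0.02, 0.05]
cost, paid = lockup_summary(costs, reward_rate=0.02)
print(cost, paid)
```

Only the last unit to join has a cost equal to the reward rate; every other deposited unit bears less, so the total private cost is well below the reward paid at the margin.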

Second, locking up capital is a private cost, but also a public good. The presence of locked up capital means that there is less money supply available for transactional purposes, and so the value of the currency will increase, redistributing the capital to everyone else, creating a social benefit. Third, security deposits are a very safe store of value, so (i) they substitute the use of money as a personal crisis insurance tool, and (ii) many users will be able to take out loans in the same currency collateralized by the security deposit. Finally, because proof of stake can actually take away deposits for misbehaving, and not just rewards, it is capable of achieving a level of security much higher than the level of rewards, whereas in the case of proof of work the level of security can only equal the level of rewards. There is no way for a proof of work protocol to destroy misbehaving miners’ ASICs.

Fortunately, there is a way to test those assumptions: launch a proof of stake coin with a stake reward of 1%, 2%, 3%, etc per year, and see just how large a percentage of coins become deposits in each case. Users will not act against their own interests, so we can simply use the quantity of funds spent on consensus as a proxy for how much inefficiency the consensus algorithm introduces; if proof of stake has a reasonable level of security at a much lower reward level than proof of work, then we know that proof of stake is a more efficient consensus mechanism, and we can use the levels of participation at different reward levels to get an accurate idea of the ratio between total cost and marginal cost. Ultimately, it may take years to get an exact idea of just how large the capital lockup costs are.

Altogether, we now know for certain that (i) proof of stake algorithms can be made secure, and weak subjectivity is both sufficient and necessary as a fundamental change in the security model to sidestep nothing-at-stake concerns to accomplish this goal, and (ii) there are substantial economic reasons to believe that proof of stake actually is much more economically efficient than proof of work. Proof of stake is not an unknown; the past six months of formalization and research have determined exactly where the strengths and weaknesses lie, at least to as large an extent as with proof of work, where mining centralization uncertainties may well forever abound. Now, it is simply a matter of standardizing the algorithms, and giving blockchain developers the choice.
